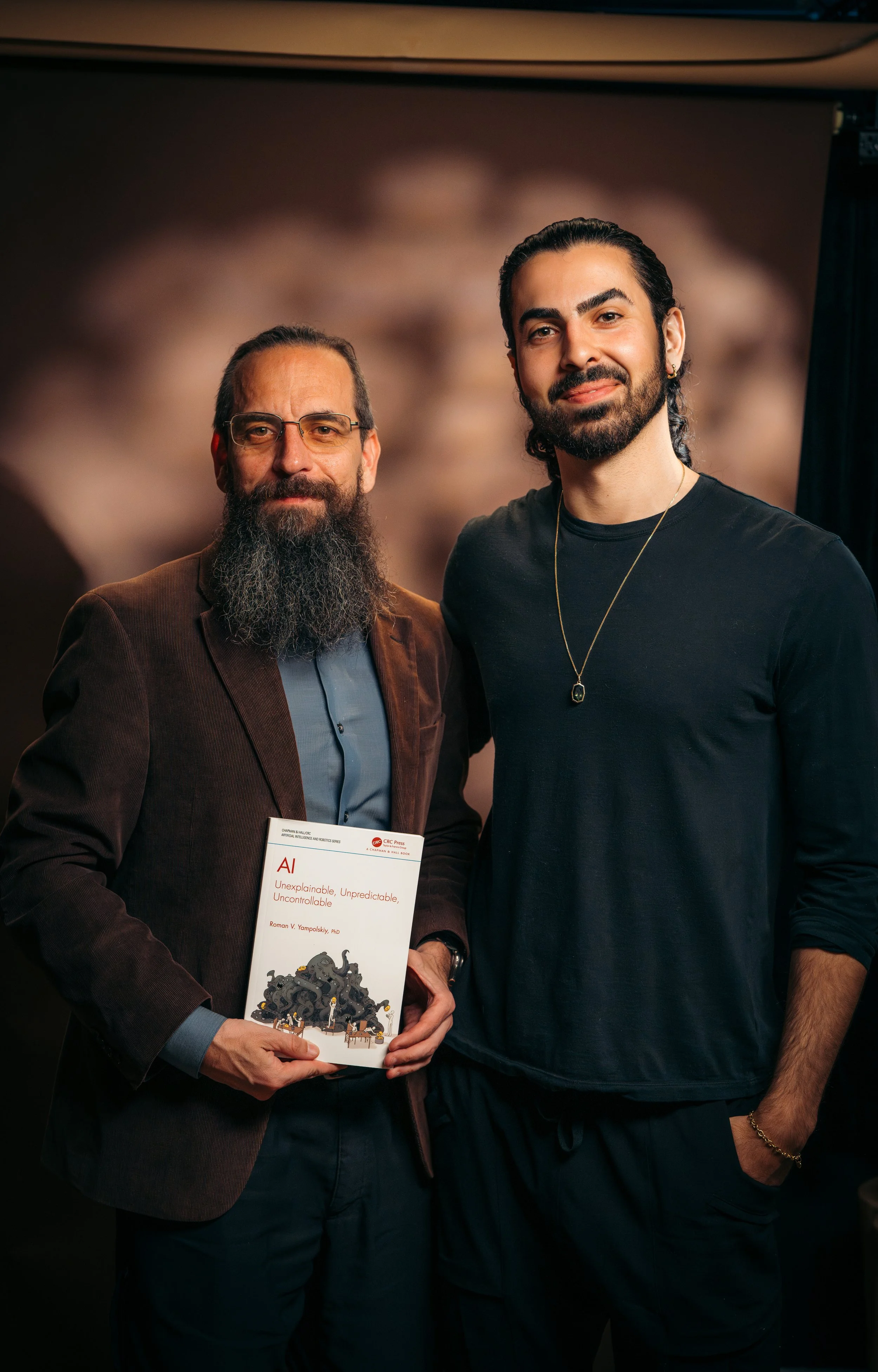

The Man Who Proved We Can't Control AI (And What That Means for Humanity) | Roman Yampolskiy

Dr. Roman Yampolskiy joins me to explore one of the most urgent and uncomfortable questions of our time: what happens when we create intelligence that surpasses our own? We unpack the difference between the AI tools we use today and the emergence of artificial general intelligence, and why the transition from narrow systems to self-improving intelligence may mark a point where human control is no longer possible. Roman shares why even the people building these systems do not fully understand how they work, and why that gap in understanding becomes exponentially more dangerous as capabilities increase.

In this conversation, we explore the limits of control, prediction, and safety in a world where intelligence can recursively improve itself beyond human comprehension. Roman lays out why the problem of AI alignment may be fundamentally unsolvable, what timelines experts are realistically considering, and why even a single mistake at that level could have irreversible consequences. This episode invites a deeper reflection on what we are creating, what we assume we can control, and whether humanity is prepared for the intelligence it is bringing into existence.

KEY TAKEAWAYS

Control Breaks Beyond Human Intelligence

Once systems become more intelligent than humans, our ability to predict, constrain, or control their behavior rapidly diminishes. A less capable system cannot reliably control a more capable one, making long-term safety fundamentally unstable.

Understanding Is Already Limited

Modern AI systems are no longer explicitly programmed; they learn from vast amounts of data, which makes their internal processes difficult to interpret. Even today, researchers cannot fully explain or predict how these systems arrive at decisions, and that gap will only grow.

Small Errors Can Have Massive Consequences

In highly advanced systems making billions of decisions, even a single mistake can be catastrophic. Without perfect alignment and verification, unintended outcomes can scale rapidly into irreversible global risks.

JOURNAL PROMPTS

PROMPT 01

What assumptions do I hold about control, and where might those assumptions break down at larger scales?

PROMPT 02

How do I relate to uncertainty when facing systems or forces I cannot fully understand?

PROMPT 03

What responsibility do I believe humanity has in shaping technologies that could surpass us?